AI terms explained for busy teams

Forty common ideas appear again and again in everyday AI work. This summary groups them in plain language, adds quick decision guidance for real projects, and includes prompts your team can reuse.

- Bias - When an AI unfairly prefers certain answers, often because of the data it was trained on.

- Label - A tag or answer given to data so AI knows what it is.

- Model - The final program that can do tasks after learning from data.

- Training - The process where AI learns from examples to get better at its job.

- Chatbot - A computer program that talks to people in text or voice.

- Dataset - A big collection of information that AI learns from.

- Algorithm - Step-by-step instructions for solving a problem.

- Token - Words or pieces of words AI uses to read and write text.

- Overfitting - When AI learns the training data too well, quirks included, and can't handle new, different data.

- AI Agent - Software that carries out tasks for you on its own.

- AI Ethics - Making sure AI is doing things that are right and fair to everyone.

- Explainability - How easily people can understand why AI made a certain decision.

- Inference - When an AI uses what it has learned to answer new questions.

- Turing Test - A test to see if a computer can chat so well that people can't tell it apart from a human.

- Prompt - The text or question you give to an AI to get a response.

- Fine-Tuning - Training an AI again with special data to make it better at specific tasks.

- Generative AI - AI that can make new stuff, like pictures, writing, or music.

- AI Automation - Using AI to make tasks happen by themselves, without people doing them.

- Neural Network - Computer programs built a little like the human brain.

- Computer Vision - AI that helps computers understand images or video.

- Transfer Learning - Using an AI trained on one job to help with a new, different job.

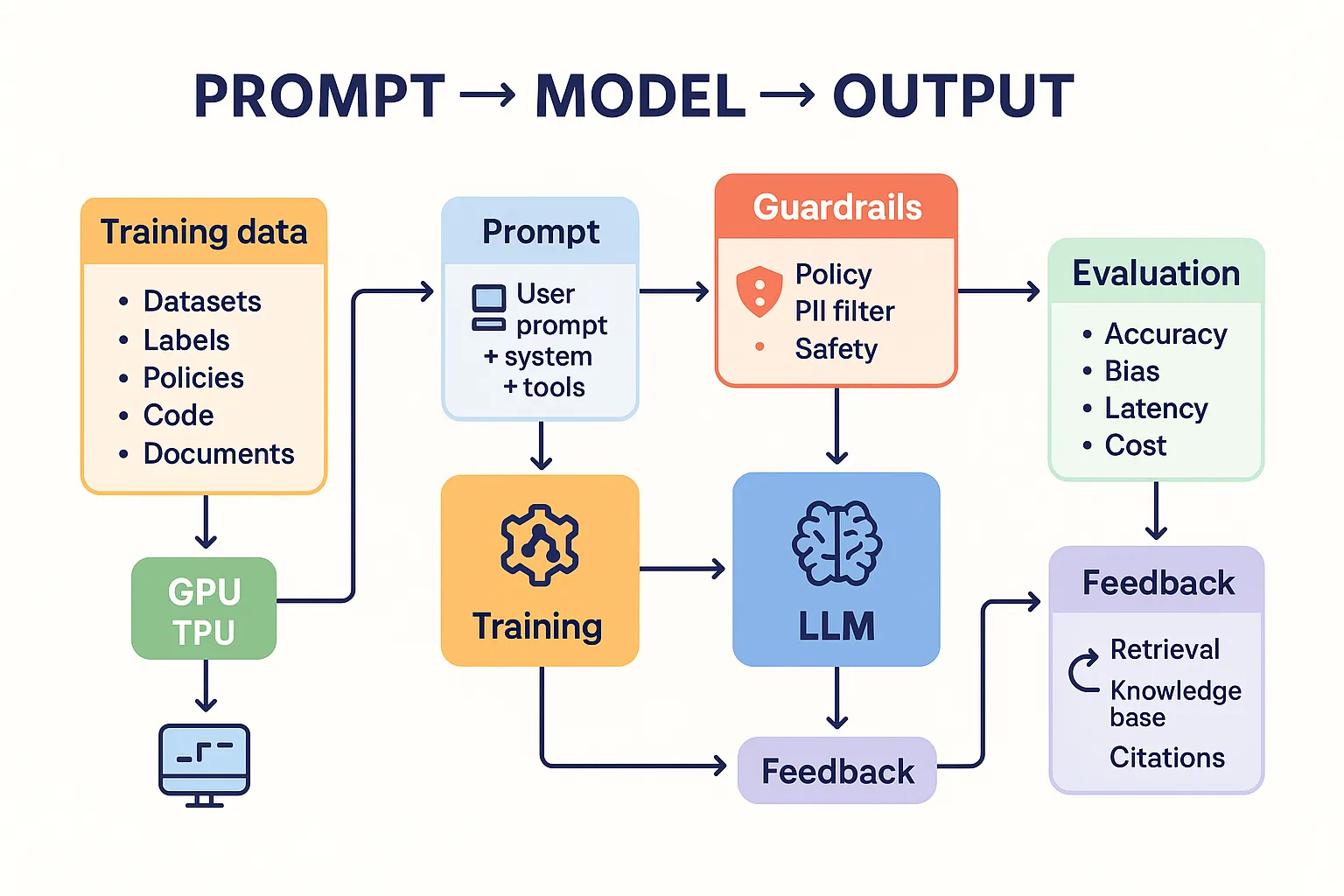

- Guardrails (in AI) - Built-in checks to stop AI from making mistakes or causing harm.

- Open Source AI - AI whose design is shared with everyone, so anyone can use or change it.

- Deep Learning - AI that learns using brain-like structures called neural networks.

- Reinforcement Learning - AI learns by trying things and getting rewards for good actions.

- Hallucination (in AI) - When AI makes up stuff that isn’t true or isn’t based on facts.

- Zero-shot Learning - AI does a new task it wasn’t directly taught just by understanding instructions.

- Speech Recognition - AI that turns spoken words into written text.

- Supervised Learning - AI learns from examples that are labeled with the right answers.

- Model Context Protocol - An open standard for how AI applications connect to outside tools and data sources and share context with them.

- Machine Learning - A way for computers to get better at tasks by looking at lots of examples.

- AI (Artificial Intelligence) - Tech that makes computers act smart, like humans do.

- Unsupervised Learning - AI finds patterns in data that is not labeled.

- LLM (Large Language Model) - An AI model that understands and writes language, trained on lots of text.

- ASI (Artificial Superintelligence) - An AI even smarter than the smartest human ever.

- GPU (Graphics Processing Unit) - Computer chips originally built for graphics that speed up training and running big AI models.

- Natural Language Processing (NLP) - AI that understands and works with human language.

- AGI (Artificial General Intelligence) - A hypothetical AI that can learn and do any intellectual task a human can.

- GPT (Generative Pretrained Transformer) - A famous type of AI that writes text like a human.

- API (Application Programming Interface) - A way for different programs to talk to each other, often to use AI features.

- Most work fits three buckets: data and setup, model choice and evaluation, and safety and delivery.

- Pick the simplest approach that meets the acceptance test you define before building.

- Guardrails and clear ownership reduce rework more than any model tweak.

Foundations that show up in every project

Think of an algorithm as a recipe and a model as the trained cook who learned it. The cook learned through training on a dataset. When you ask for a result, you are running inference. Text is broken into tokens, and the request you send is a prompt. A chatbot is just a user interface wrapped around that flow.
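To make the recipe-and-cook analogy concrete, here is a toy sketch in Python. It assumes a tiny made-up dataset and uses word-pair counting as the "algorithm"; the resulting counts are the "model". Real language models are neural networks trained very differently, and the names `train` and `infer` are illustrative only.

```python
from collections import Counter, defaultdict
from typing import Dict, List, Optional

def train(dataset: List[str]) -> Dict[str, Counter]:
    """Training: the algorithm (counting which word follows which) turns a dataset into a model."""
    counts: Dict[str, Counter] = defaultdict(Counter)
    for sentence in dataset:
        tokens = sentence.lower().split()  # crude whitespace tokens, not real subword tokens
        for current, following in zip(tokens, tokens[1:]):
            counts[current][following] += 1
    return dict(counts)

def infer(model: Dict[str, Counter], prompt: str) -> Optional[str]:
    """Inference: use the learned counts to guess the word that comes after the prompt."""
    tokens = prompt.lower().split()
    if not tokens or tokens[-1] not in model:
        return None  # the model never saw this word during training
    return model[tokens[-1]].most_common(1)[0][0]

# Hypothetical training data standing in for a real dataset.
dataset = [
    "reset your password from the settings page",
    "reset your router before calling support",
    "update your password every ninety days",
]
model = train(dataset)
print(infer(model, "How do I reset"))      # your
print(infer(model, "Please update your"))  # password
```

Training runs once over the dataset; inference is the cheap lookup that happens on every request, which is why the two are planned and budgeted separately.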

- Bias and overfitting are warning signs: results that lean unfair, or a model that memorizes examples instead of generalizing.

- Explainability helps humans see why the system decided something.

- The Turing test is a thought experiment, not an acceptance test for production.

- Open-source options can improve control and cost, while closed models can reduce setup time.

- Algorithm versus model: an algorithm is the method a model uses to learn or decide, and a model is the thing that learned.

- Training versus inference: training happens before launch, and inference happens at request time.

- Token versus word: tokens are subword chunks that models use to process text efficiently.
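Greedy longest-match lookup is one simple way to see how a word becomes subword tokens. WordPiece-style tokenizers match greedily like this, while byte-pair encoding builds its pieces by merging, so treat this as a sketch that assumes a tiny, made-up vocabulary; real tokenizers will split the same words differently.

```python
from typing import List, Set

def tokenize(word: str, vocab: Set[str]) -> List[str]:
    """Split a word into the longest vocabulary pieces, scanning left to right."""
    pieces: List[str] = []
    start = 0
    while start < len(word):
        # Try the longest remaining substring first, then shrink it one character at a time.
        for end in range(len(word), start, -1):
            if word[start:end] in vocab:
                pieces.append(word[start:end])
                start = end
                break
        else:
            # No vocabulary piece fits: fall back to a single character.
            pieces.append(word[start])
            start += 1
    return pieces

# Hypothetical vocabulary; real tokenizers learn tens of thousands of pieces from data.
vocab = {"token", "iz", "ation", "un", "believ", "able"}
print(tokenize("tokenization", vocab))  # ['token', 'iz', 'ation']
print(tokenize("unbelievable", vocab))  # ['un', 'believ', 'able']
```

One word can cost several tokens, which is why token counts, not word counts, drive context limits and usage pricing.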

Define what good looks like before you pick a model, then measure against that definition.

Learning styles and when to use them

Different problems suggest different learning approaches. Make the smallest change that solves today’s task, then iterate.

Supervised and friends

- Supervised learning needs labeled examples and is great for classification and scoring.

- Unsupervised learning finds patterns where labels do not exist.

- Reinforcement learning learns through trial and error, guided by rewards. It is useful for policy and ranking problems.

- Transfer learning starts from a pretrained model and adapts it to a new task.

- Fine-tuning adjusts a model's weights for a focused specialty when prompts alone cannot hold style or facts.

- Zero-shot learning lets a capable model follow instructions for tasks it never saw explicitly.
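The practical difference between supervised and unsupervised learning is whether labels are available. A minimal sketch, assuming one-dimensional data (hypothetical ticket lengths in words): the supervised half is a nearest-centroid classifier that learns one average per given label, and the unsupervised half is a basic k-means loop that finds groups with no labels at all. Both are deliberately simple stand-ins for real learning methods.

```python
from statistics import mean
from typing import Dict, List

def fit_centroids(points: List[float], labels: List[str]) -> Dict[str, float]:
    """Supervised: labels are given, so learn the average value for each label."""
    return {
        label: mean(p for p, l in zip(points, labels) if l == label)
        for label in set(labels)
    }

def predict(centroids: Dict[str, float], x: float) -> str:
    """Assign a new value to the label whose average is closest."""
    return min(centroids, key=lambda label: abs(centroids[label] - x))

def kmeans_1d(points: List[float], k: int, steps: int = 10) -> List[float]:
    """Unsupervised: no labels, so discover k group centers from the data alone."""
    ordered = sorted(points)
    centers = ordered[:: max(1, len(ordered) // k)][:k]  # spread-out starting guesses
    for _ in range(steps):
        groups: List[List[float]] = [[] for _ in centers]
        for p in points:
            nearest = min(range(len(centers)), key=lambda i: abs(centers[i] - p))
            groups[nearest].append(p)
        # Move each center to the mean of its group; keep it if the group is empty.
        centers = [mean(g) if g else c for g, c in zip(groups, centers)]
    return centers

# Hypothetical ticket lengths in words, with and without labels.
lengths = [2.0, 3.0, 4.0, 20.0, 22.0, 24.0]
tags = ["short", "short", "short", "long", "long", "long"]

centroids = fit_centroids(lengths, tags)
print(predict(centroids, 5.0))   # short
print(predict(centroids, 18.0))  # long
print(kmeans_1d(lengths, k=2))   # [3.0, 22.0]
```

Both halves land on the same two groups here, but only the supervised version knows what to call them; the unsupervised one just reports where the clusters are.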

Modalities and scale

- A neural network is the general architecture used by modern models.

- Deep learning stacks many layers, which improves perception tasks such as computer vision and speech recognition.

- An LLM is a text-focused model type, GPT is one specific family of LLMs, and NLP is the broader field of working with human language.

- GPUs accelerate both training and inference for big models.

- AGI and ASI are future-oriented ideas, not delivery milestones for today’s roadmap.
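A neural network is mostly layers of weighted sums passed through simple nonlinear functions, and deep learning stacks many such layers. Here is a minimal two-layer forward pass (inference only) in plain Python, with hand-picked weights that are purely illustrative. Real models have millions to billions of learned weights, and GPUs help because they run these multiply-and-add steps in parallel.

```python
from typing import List

Vector = List[float]
Matrix = List[List[float]]

# Hand-picked, purely illustrative weights; a real network learns these in training.
HIDDEN_W: Matrix = [[1.0, -1.0], [0.5, 0.5]]
HIDDEN_B: Vector = [0.0, -1.0]
OUTPUT_W: Matrix = [[2.0, 1.0]]
OUTPUT_B: Vector = [0.5]

def dense(inputs: Vector, weights: Matrix, biases: Vector) -> Vector:
    """One layer: each output is a weighted sum of the inputs plus a bias."""
    return [sum(w * x for w, x in zip(row, inputs)) + b for row, b in zip(weights, biases)]

def relu(values: Vector) -> Vector:
    """Nonlinearity: keep positive values, zero out negatives."""
    return [max(0.0, v) for v in values]

def forward(inputs: Vector) -> Vector:
    """Inference through a two-layer network: input -> hidden layer -> output."""
    hidden = relu(dense(inputs, HIDDEN_W, HIDDEN_B))
    return dense(hidden, OUTPUT_W, OUTPUT_B)

print(forward([3.0, 1.0]))  # [5.5]
print(forward([0.0, 2.0]))  # [0.5]
```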

Shipping with safety and reliability

Guardrails and process reduce risk and support compliance. Plan them early and treat them as features.

- Guardrails enforce policy and block unsafe outputs.

- Hallucination is confident wrongness. Reduce it with retrieval and clear grounding.

- Model Context Protocol (MCP) and similar patterns help systems exchange context and tools consistently.

- AI ethics requires clear rules for data use, consent, access, and appeal routes.

- AI agents and automation should include clear ownership and an audit trail so people can intervene.

- Write an acceptance checklist covering accuracy, safety, latency, cost, and owner.

- Log prompts and decisions with privacy in mind.

- Add human review for high impact outputs.
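The guardrail, logging, and human-review points can be combined into one gate that every output passes before reaching users. A minimal sketch, assuming a hypothetical blocked-phrase list, a simple email regex standing in for broader privacy redaction, and a caller-supplied `high_impact` flag. Production guardrails usually rely on dedicated policy or safety classifiers rather than keyword matching.

```python
import re
from dataclasses import dataclass

# Hypothetical policy list; real guardrails usually rely on dedicated safety classifiers.
BLOCKED_PHRASES = ("guaranteed cure", "wire the money")
# Simple email pattern used as a stand-in for broader privacy redaction.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")

@dataclass
class Decision:
    action: str     # "block", "review", or "send"
    log_entry: str  # what gets stored, with emails redacted

def gate(prompt: str, output: str, high_impact: bool) -> Decision:
    """Apply guardrails, route high-impact outputs to a human, and log with privacy in mind."""
    log_entry = EMAIL.sub("[email]", f"prompt={prompt!r} output={output!r}")
    if any(phrase in output.lower() for phrase in BLOCKED_PHRASES):
        return Decision("block", log_entry)
    if high_impact:
        return Decision("review", log_entry)
    return Decision("send", log_entry)

decision = gate("My email is sam@example.com", "Here is our refund policy.", high_impact=False)
print(decision.action)     # send
print(decision.log_entry)  # prompt='My email is [email]' output='Here is our refund policy.'
```

Blocking is checked before review so that unsafe text never reaches a reviewer's queue as if it were a normal draft, and every path still produces a redacted log entry for audit.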

Starter workflow you can try today

This lightweight recipe turns the glossary into action for content teams or support teams. It assumes a capable LLM and a small knowledge base.

Setup steps

- Collect ten to twenty approved examples and label them with desired attributes.

- Decide thresholds for success and how you will measure them.

- Add guardrails and a human review path for exceptions.
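Deciding thresholds up front means the acceptance test can run as code. A minimal sketch, assuming labeled examples for a citation-needed check like the Classifier prompt, and a hypothetical `naive_needs_citation` function standing in for the real LLM call. The thresholds and owner name are placeholders for whatever your team agrees on.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

@dataclass
class Acceptance:
    min_accuracy: float    # share of labeled examples that must be right
    max_latency_ms: float  # slowest response you will accept
    owner: str             # person who signs off on changes

def run_acceptance(
    examples: List[Tuple[str, str]],
    predict: Callable[[str], str],
    latencies_ms: List[float],
    acceptance: Acceptance,
) -> Dict[str, object]:
    """Score the labeled examples and compare the results with the agreed thresholds."""
    correct = sum(1 for text, label in examples if predict(text) == label)
    accuracy = correct / len(examples)
    worst_latency = max(latencies_ms)
    passed = accuracy >= acceptance.min_accuracy and worst_latency <= acceptance.max_latency_ms
    return {"pass": passed, "accuracy": accuracy, "worst_latency_ms": worst_latency, "owner": acceptance.owner}

def naive_needs_citation(text: str) -> str:
    """Hypothetical stand-in for an LLM call: flag any sentence containing a digit."""
    return "Yes" if any(ch.isdigit() for ch in text) else "No"

# A few hypothetical labeled examples; in practice use your ten to twenty approved ones.
examples = [
    ("Revenue grew 40% last year.", "Yes"),
    ("We love our customers.", "No"),
    ("Three in four users agree, per a 2023 survey.", "Yes"),
    ("Experts agree this is the best tool.", "Yes"),
]
report = run_acceptance(
    examples, naive_needs_citation, [420.0, 380.0, 510.0, 450.0],
    Acceptance(min_accuracy=0.9, max_latency_ms=800.0, owner="support-lead"),
)
print(report)  # {'pass': False, 'accuracy': 0.75, 'worst_latency_ms': 510.0, 'owner': 'support-lead'}
```

The naive stand-in misses the last example, so the run fails the 0.9 accuracy bar even though latency passes. Catching that before launch is the point of writing the test first.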

Reusable prompts

Classifier

Task

Decide if the draft contains claims that require a citation.

Answer on one line with Yes or No, then list the sentences that require sources.

Draft

[Paste text]

Grounded writer

Goal

Write a 120-word answer grounded only in the provided excerpts.

Do not invent facts. If information is missing, say "Not enough information."

Question

[User question]

Excerpts

[Top three snippets from your knowledge base]

Evaluation checklist

Evaluate this output against the acceptance test.

Return a JSON object with a boolean pass field and a notes field.

Acceptance

[Your criteria]
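The grounded writer and evaluation checklist prompts are easier to reuse when a small script fills them in and checks the reply. A minimal sketch, assuming a naive word-overlap retriever standing in for real search over your knowledge base, and an evaluator that replies with the pass/notes JSON the checklist asks for. The LLM calls themselves are left out because they depend on your provider, and all function names here are hypothetical.

```python
import json
import re
from typing import List, Tuple

GROUNDED_WRITER = """Goal
Write a 120-word answer grounded only in the provided excerpts.
Do not invent facts. If information is missing, say "Not enough information."

Question
{question}

Excerpts
{excerpts}"""

def _words(text: str) -> set:
    return set(re.findall(r"\w+", text.lower()))

def top_snippets(question: str, knowledge_base: List[str], k: int = 3) -> List[str]:
    """Naive retrieval: rank snippets by how many words they share with the question."""
    query = _words(question)
    ranked = sorted(knowledge_base, key=lambda s: len(query & _words(s)), reverse=True)
    return ranked[:k]

def build_prompt(question: str, knowledge_base: List[str]) -> str:
    """Fill the grounded writer template with the question and the top three snippets."""
    excerpts = "\n".join(f"- {s}" for s in top_snippets(question, knowledge_base))
    return GROUNDED_WRITER.format(question=question, excerpts=excerpts)

def parse_evaluation(raw: str) -> Tuple[bool, str]:
    """Read the evaluator's JSON reply; treat anything malformed as a failed check."""
    try:
        data = json.loads(raw)
        passed = data["pass"]
    except (json.JSONDecodeError, KeyError, TypeError):
        return False, "unparseable evaluator output"
    if not isinstance(passed, bool):
        return False, "pass field is not a boolean"
    return passed, str(data.get("notes", ""))

# Hypothetical knowledge base snippets.
kb = [
    "Refunds are issued within 14 days of approval.",
    "Our office is in Lisbon.",
    "Refunds require a receipt.",
    "Shipping usually takes 3 days.",
]
print(build_prompt("How are refunds issued?", kb))
print(parse_evaluation('{"pass": true, "notes": "Grounded in excerpt 1."}'))  # (True, 'Grounded in excerpt 1.')
print(parse_evaluation("Looks good to me!"))  # (False, 'unparseable evaluator output')
```

Failing closed on malformed evaluator output keeps a flaky judge from waving ungrounded answers through.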

Frequently asked questions

Which terms matter most for first projects?

Focus on dataset, prompt, inference, guardrails, bias, and evaluation. These determine quality more than switching among comparable models.

When should we fine-tune instead of prompting?

Fine-tune when you need stable style or domain precision at scale and prompting with retrieval still drifts. If the task is narrow, consider a smaller fine-tuned model to cut cost.

How do we reduce hallucination?

Ground answers in curated excerpts, use citations, enforce guardrails, and reject responses that cannot cite sources. Track failure types and update your knowledge base.

What is the quickest safe way to pilot?

Pick one use case with clear success criteria, route outputs to internal reviewers first, add logging, and roll out to a small audience with feedback loops.

How do agents help?

Agents coordinate steps like retrieval, drafting, and review. They shine when tasks require multiple tools and checks. Keep humans in the loop for judgment calls.

Common mistakes to avoid

- Launching without an acceptance test.

- Collecting data without consent or a retention policy.

- Overfitting to demos, then failing on real traffic.

- Ignoring latency and cost until production.
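The overfitting mistake is cheap to catch: measure the same system on the demo examples and on a held-out set it was never tuned on, and treat a large gap as a warning. A minimal sketch, assuming a hypothetical `memorizer` that only knows its demo inputs and an illustrative 0.1 gap threshold.

```python
from typing import Callable, List, Tuple

Examples = List[Tuple[str, str]]

def accuracy(predict: Callable[[str], str], examples: Examples) -> float:
    """Share of examples where the prediction matches the label."""
    return sum(predict(text) == label for text, label in examples) / len(examples)

def overfitting_gap(
    predict: Callable[[str], str], demo_set: Examples, held_out_set: Examples, max_gap: float = 0.1
) -> Tuple[float, bool]:
    """Compare demo accuracy with accuracy on examples the system was never tuned on."""
    gap = accuracy(predict, demo_set) - accuracy(predict, held_out_set)
    return gap, gap > max_gap

# Hypothetical system that memorized its demo inputs word for word.
MEMORIZED = {"reset my password": "account", "where is my parcel": "shipping"}

def memorizer(text: str) -> str:
    return MEMORIZED.get(text.lower(), "other")

demo = [("Reset my password", "account"), ("Where is my parcel", "shipping")]
held_out = [("I forgot my password", "account"), ("Parcel has not arrived", "shipping"), ("Hello", "other")]

gap, flagged = overfitting_gap(memorizer, demo, held_out)
print(round(gap, 2), flagged)  # 0.67 True
```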