What is Context Engineering?

Context engineering is how you make AI smarter at every step, by managing what it sees, when, and why. Think of it as the architecture behind the prompt.

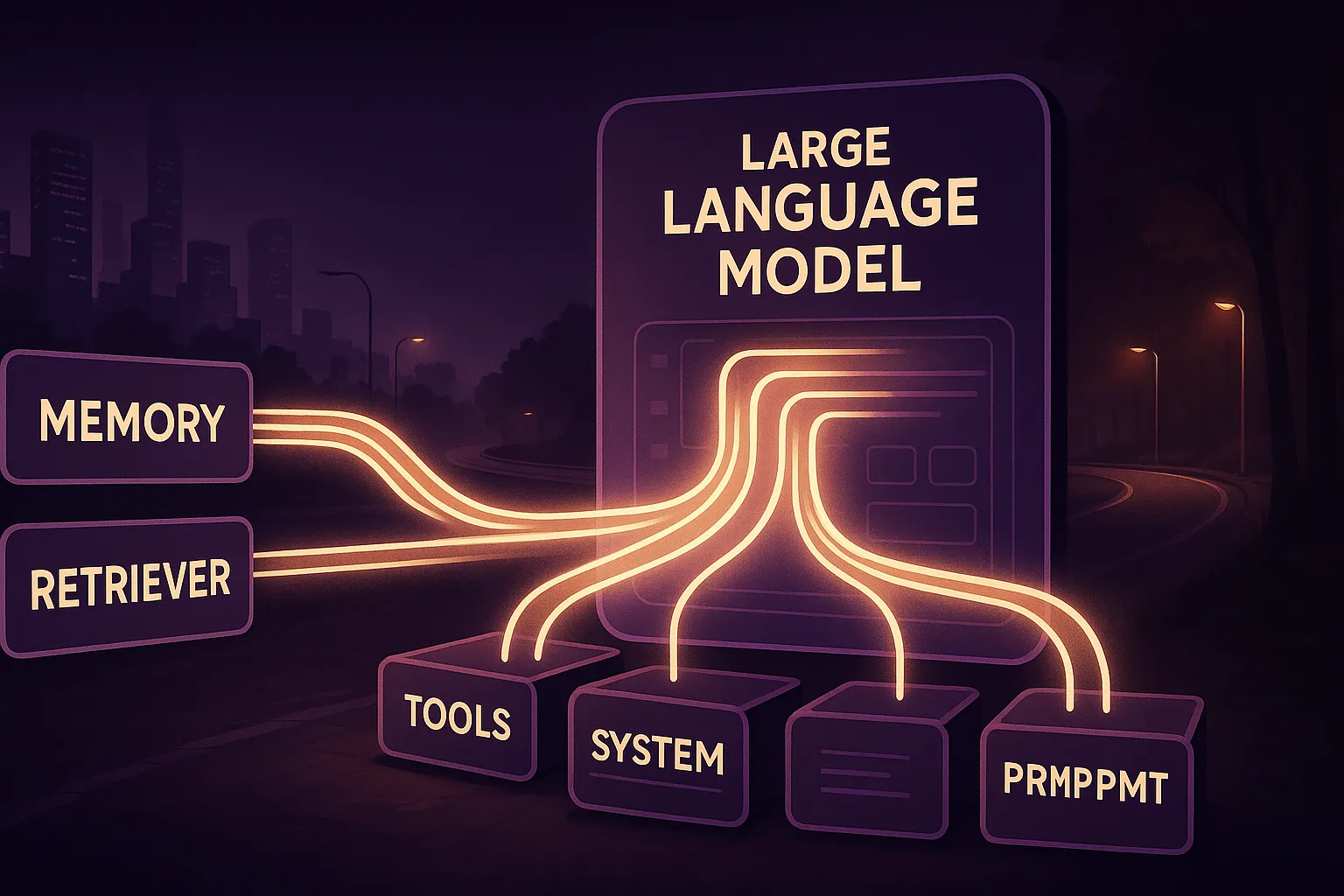

From Prompt to Context Stack

Prompt engineering taught us how to talk to LLMs. Context engineering goes further: it designs full pipelines of memory, tools, documents, and user state that shape an AI's output.

- System prompts - behavioral and tool-level constraints

- Tools - schemas, usage summaries, and validation context

- Memory - chat logs, user profiles, vector database results

- Retriever context - documents, metadata, few-shot examples

Together, these layers make the AI's behavior more consistent, trustworthy, and personalized at scale.
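As a minimal sketch of how these four layers might be assembled into one prompt, the Python below uses a hypothetical `ContextStack` class. The class name, field names, section labels, and the `lookup_invoice` tool are illustrative assumptions, not part of any library.

```python
from dataclasses import dataclass, field


@dataclass
class ContextStack:
    """Hypothetical container for the four layers listed above."""
    system_prompt: str
    tools: list[str] = field(default_factory=list)      # tool schemas or usage summaries
    memory: list[str] = field(default_factory=list)     # chat logs, profile facts
    retrieved: list[str] = field(default_factory=list)  # documents, few-shot examples

    def render(self) -> str:
        """Join the non-empty layers into one labeled prompt string."""
        sections = [
            ("SYSTEM", [self.system_prompt]),
            ("TOOLS", self.tools),
            ("MEMORY", self.memory),
            ("RETRIEVED", self.retrieved),
        ]
        parts = []
        for label, items in sections:
            if items:  # skip empty layers so they do not waste window space
                parts.append(f"## {label}\n" + "\n".join(items))
        return "\n\n".join(parts)


stack = ContextStack(
    system_prompt="You are a billing assistant. Only answer invoice questions.",
    tools=["lookup_invoice(invoice_id: str) -> dict"],
    memory=["User asked about invoice automation"],
)
prompt = stack.render()
print(prompt)
```

Keeping each layer in its own field makes it easy to swap, trim, or drop one layer without touching the others.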

4 Techniques Used in Context Engineering

The most effective frameworks use a blend of these strategies:

- Write - Craft modular system and user prompts

- Select - Dynamically fetch only relevant info

- Compress - Summarize or embed long docs into short chunks

- Isolate - Prevent noisy or off-topic bleed between sources
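The four strategies can be sketched as four small Python functions. This is a toy illustration under stated assumptions: word overlap stands in for a real retriever, truncation stands in for summarization, and all function names and sample documents are hypothetical.

```python
def write(role: str, task: str) -> str:
    """Write: build the prompt from small reusable pieces."""
    return f"You are {role}.\nTask: {task}"


def select(query: str, docs: dict[str, str], k: int = 2) -> dict[str, str]:
    """Select: keep the k docs sharing the most words with the query.

    Word overlap is a stand-in for a real retriever (assumption).
    """
    q = set(query.lower().split())
    ranked = sorted(
        docs,
        key=lambda name: len(q & set(docs[name].lower().split())),
        reverse=True,
    )
    return {name: docs[name] for name in ranked[:k]}


def compress(text: str, max_words: int = 8) -> str:
    """Compress: naive truncation standing in for summarization (assumption)."""
    words = text.split()
    if len(words) <= max_words:
        return text
    return " ".join(words[:max_words]) + " ..."


def isolate(docs: dict[str, str]) -> str:
    """Isolate: wrap each source in its own tagged block so content does not bleed."""
    return "\n".join(
        f'<source name="{name}">\n{body}\n</source>' for name, body in docs.items()
    )


docs = {
    "faq": "Invoices are sent on the first day of each month by email",
    "menu": "The cafeteria serves pasta on Tuesdays",
    "policy": "Late invoices incur a two percent fee after thirty days",
}
query = "when are invoices sent"

chosen = select(query, docs)
compressed = {name: compress(body) for name, body in chosen.items()}
context = write("a billing assistant", query) + "\n\n" + isolate(compressed)
print(context)
```

The off-topic `menu` document never reaches the prompt, and each surviving source arrives shortened and clearly delimited.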

"Context engineering is the art of filling the window with just the right information for the next step."

- Andrej Karpathy

Why It Matters

Real-world Use Cases

- AI tutors that track lesson history and adjust learning styles

- Support bots using past tickets and live tool results

- Legal agents referencing case law + firm playbooks

Each one uses a form of context routing, memory, and formatting, often built with LangChain, LlamaIndex, or vector DBs like Pinecone or Weaviate.
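Context routing for the three use cases above might look roughly like this in Python. It is a sketch only: the source functions are hypothetical stubs, and a real system would query a vector database, ticket API, or document store instead.

```python
from typing import Callable


# Hypothetical context sources returning placeholder strings.
def lesson_history(user_id: str) -> str:
    return f"[lessons for {user_id}]"


def past_tickets(user_id: str) -> str:
    return f"[tickets for {user_id}]"


def firm_playbook(user_id: str) -> str:
    return "[firm playbook]"


# Each use case is mapped to the sources it is allowed to pull from.
ROUTES: dict[str, list[Callable[[str], str]]] = {
    "tutor": [lesson_history],
    "support": [past_tickets],
    "legal": [firm_playbook],
}


def route_context(use_case: str, user_id: str) -> list[str]:
    """Return context snippets from the sources registered for this use case."""
    sources = ROUTES.get(use_case, [])  # unknown use cases get no context
    return [fetch(user_id) for fetch in sources]


print(route_context("support", "u1"))
```

An explicit routing table keeps it visible which sources each assistant can read, which also helps with isolation.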

Model Context Protocol (MCP)

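The industry is converging around the Model Context Protocol (MCP), an open, JSON-RPC-based protocol for connecting models to tools, data sources, and prompts. It was introduced by Anthropic and is now supported by OpenAI, LangChain, and others. The payload below is a simplified illustration of the kind of context such a protocol carries, not the literal MCP message format.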

    {
      "@type": "mcp/Context",
      "tools": ["code-interpreter", "retriever"],
      "memory": {
        "summary": "User asked about invoice automation"
      },
      "documents": [
        {
          "id": "doc_1823",
          "source": "crm-logs.csv",
          "type": "csv",
          "relevance": "high"
        }
      ]
    }

Protocols like this ensure interoperability and safety across multi-tool agent workflows.
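For comparison with the illustrative payload above, here is a minimal Python sketch of a JSON-RPC 2.0 request of the kind an MCP client sends to invoke a tool. The `tools/call` method and the `name`/`arguments` parameters come from the published MCP specification. The helper function and the argument keys (`query`, `top_k`) are hypothetical.

```python
import itertools
import json

_ids = itertools.count(1)  # JSON-RPC requests carry a unique id per request


def tool_call_request(tool: str, arguments: dict) -> str:
    """Serialize a JSON-RPC 2.0 request asking a server to run one tool."""
    message = {
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    }
    return json.dumps(message, indent=2)


# "retriever" is the tool name from the payload above; its arguments are assumed.
request = tool_call_request("retriever", {"query": "invoice automation", "top_k": 3})
print(request)
```

This only builds the message. Transport, capability negotiation, and response handling are left out.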

Try This Prompt

Use this in GPT-4, Claude, Gemini, or your own RAG system:

Act as a senior LLM systems designer. Help me create a context engineering plan for an AI assistant that helps [insert domain]. List:

1. Required context sources

2. Retrieval methods

3. Window management strategies

4. Risks or context collisions

5. A JSON-based example of the input structure
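Item 3 in the prompt, window management, can be made concrete with a small Python sketch that keeps only the most recent messages that fit a budget. The `fit_to_window` helper is hypothetical, and word count is used as a rough proxy for tokens. A real system would use the model's own tokenizer.

```python
def fit_to_window(messages: list[str], budget: int) -> list[str]:
    """Keep the most recent messages whose combined word count fits the budget.

    Word count is a rough proxy for tokens (assumption).
    """
    kept: list[str] = []
    used = 0
    for message in reversed(messages):  # walk from newest to oldest
        cost = len(message.split())
        if used + cost > budget:
            break  # stop at the first message that would overflow
        kept.append(message)
        used += cost
    return list(reversed(kept))  # restore chronological order


history = [
    "Hi, I need help with invoices",
    "Sure, which invoice number?",
    "Invoice 1823 from last month",
]
print(fit_to_window(history, budget=10))
```

Dropping the oldest turns first is the simplest policy. Summarizing them instead is a common alternative.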