Google BlockRank background facts and actions for 2025

This is a plain-English briefing on Google BlockRank with sources verified in late October 2025. It explains what BlockRank is, why it matters to ranking research, what is publicly known, and how teams can prepare without overreacting.

Author Chris Guild |

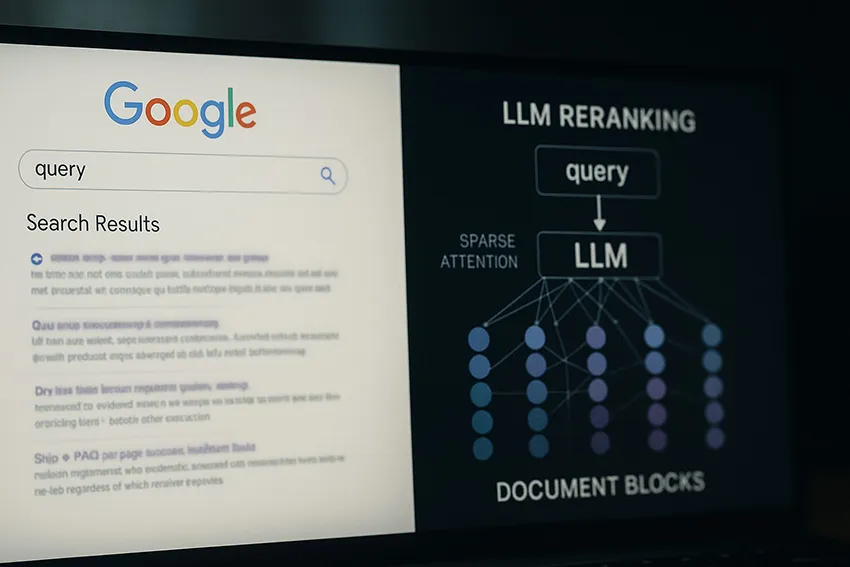

- BlockRank is a research method for in-context ranking that restructures LLM attention over document blocks to gain speed and quality on standard ranking benchmarks.

- As of October 2025 there is no public confirmation that BlockRank runs in Google Search. Treat it as research, not a shipped ranking system.

- Benchmarks reported include BEIR, MS MARCO, and Natural Questions with results competitive to strong rerankers using a 7B model.

What BlockRank is and how it works

BlockRank is a method proposed by Google DeepMind researchers to make in-context ranking with large language models faster and more scalable. The paper analyzes how attention flows when a model reads a query together with multiple candidate documents, then constrains and guides that attention at the block level to emphasize the parts that signal relevance. The paper reports an auxiliary attention loss and structured sparse attention that together reduce wasted cross-document comparisons while preserving the signal between query tokens and relevant document blocks.

Key ideas you should know:

- In-context ranking feeds a short instruction, the search query, and several candidate documents into an LLM and asks it to produce a relevance-ordered list. BlockRank keeps the in-context flow but trims computation by assuming limited cross-document interaction is needed.

- Two empirical patterns steer the design

- Inter-document block sparsity where documents can be treated largely independently for attention during ranking.

- Query-document block relevance where specific query tokens carry most of the retrieval signal.

- Benchmarks include BEIR, MS MARCO, and Natural Questions. Results with a Mistral-7B base match or surpass strong rerankers while cutting inference time at larger candidate counts.

BlockRank in research settings appears aimed at democratizing high-quality semantic ranking by reducing compute costs, which could help smaller teams adopt LLM reranking within retrieval pipelines.

What is publicly confirmed as of 2025

Public sources confirm a peer-review style preprint describing BlockRank and its evaluation. Independent industry coverage summarizes the work and states that there is no claim or evidence that BlockRank currently runs in Google Search or AI Overviews. The coverage also notes a placeholder GitHub reference without released code at the time of publication.

- The arXiv manuscript details the method, hyperparameters, datasets, and efficiency measurements with figure references and appendix notes.

- Industry write-ups highlight the in-context ranking framing and reiterate that deployment in Google Search is not claimed. }

- A public repository reference exists for BlockRank with limited or early materials observed by press at the time.

Useful background for teams tracking search technology changes

- Google algorithm history remains separate from this research line. Keep routine monitoring of official update histories for confirmed ranking changes.

- The 2024 documentation leak discourse is also separate. Treat it as context about signals and systems, not as evidence that BlockRank is deployed.

“The research paper says nothing about it being used in a live environment.”

Why this matters for SEO AIO GEO AEO SXO

BlockRank sits in the reranking layer that often follows first-stage retrieval. Many modern answer engines and enterprise search stacks already combine sparse or dense retrieval with a precise reranker before synthesis. A method that keeps quality but lowers compute means more teams can afford listwise reranking, which raises the bar for relevance.

Practical reading on ranking context

- Expect continued use of retrieval plus reranking plus synthesis patterns in AI answers.

- Content that aligns to explicit questions, carries clean evidence, and presents extractable passages tends to rise when rerankers score on usefulness for the query.

- Clear sectioning, anchors, and concise answers often help both traditional ranking and AI answer inclusion for GEO and AEO work.

None of this changes the fundamentals that still move results in 2025 articles and industry summaries. Helpful content quality, intent match, performance signals, and trust indicators continue to correlate with winning pages while systems evolve.

Action checklist for teams tracking BlockRank

- Structure pages with short intros, direct answers, and evidence blocks that a reranker can score quickly for a single query. Add precise headings and passage-level clarity.

- Expose clean passages with semantic HTML and anchor links that models can cite. Keep one idea per paragraph when possible. Google BlockRank research values clarity at the passage level.

- Maintain stable claims with citations. Place numbers beside sources and name the year in body copy.

- Keep technical health high so first-stage retrieval does not fail you. Fix crawl traps, canonical conflicts, and thin duplications.

- Publish Q&A sections with succinct answers for AEO and GEO. Keep each answer useful on its own.

Suggested internal and external references

- Internal index feed for related reading

- Primary research and coverage

- BlockRank paper on arXiv with method, datasets, and efficiency notes.

- Industry explainer with deployment caveat and GitHub pointer.

- Algorithm update history hub for confirmed changes.

Frequently asked questions

Short answers follow for quick scanning. Use the linked sources for deeper reading.

What is BlockRank

It is a research method for in-context ranking that restructures an LLM’s attention to focus on query-relevant blocks within candidate documents. The goal is strong listwise ranking quality with lower compute compared to full fine-tuning rerankers.Is BlockRank part of Google Search today

There is no public confirmation that BlockRank runs in Google Search or AI Overviews as of October 2025. Treat it as research only.How does BlockRank differ from common rerankers

Classic rerankers score passages pairwise or listwise with fully dense attention over all tokens. BlockRank constrains attention across document blocks and adds an auxiliary attention objective guided by query tokens, which improves speed at higher candidate counts.Which benchmarks are reported

The paper reports results on BEIR, MS MARCO, and Natural Questions using a 7B base model with performance that matches or exceeds strong baselines alongside efficiency gains.What should content teams change right now

Focus on question clarity, evidence density, clean sectioning, and technical health. These practices help any reranker and also improve AEO and GEO results. Industry analyses through 2025 point to helpful content, intent match, and experience signals as continuing priorities.Does the 2024 documentation leak confirm anything about BlockRank

No. The leak discourse is unrelated. Use it only as background on signals and systems.}Glossary for clear coordination

- In-context ranking

- Ranking that happens inside the LLM prompt where the query and candidate documents are read together to produce an ordered list.

- Reranker

- A second-stage model that reorders initial retrieval results to improve relevance before synthesis in AI answers.

- BEIR

- A heterogeneous benchmark suite used to evaluate retrieval and reranking methods across tasks and domains.

- MS MARCO

- A large set of real user queries and passages used to evaluate passage ranking quality.

- Natural Questions

- A dataset built from Google queries with Wikipedia answers used to test retrieval and ranking.

Fact check notes with confidence

- BlockRank is presented in a 2025 arXiv preprint with method and results documented (high confidence).

- Coverage states no evidence of current production use in Google Search as of October 2025 (high confidence).

- Benchmarks include BEIR, MS MARCO, and Natural Questions on a 7B model with competitive results (high confidence)

- RAG pipelines commonly include reranking layers that influence GEO and AEO work today (medium confidence, widely reported).

Things to avoid while you watch this space

- Do not claim deployment inside Google Search without an official statement or clear technical proof.

- Do not switch strategy away from content quality and passage clarity that help every reranker.

- Do not read the 2024 documentation leak as confirmation of this research line.